The Computer Divide

by Andrew Boyd

Today, two roads. The University of Houston's College of Engineering presents this series about the machines that make our civilization run, and the people whose ingenuity created them.

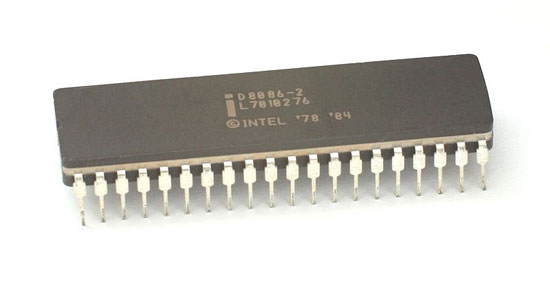

The year 1978 was a landmark in the history of computing. It was the year Intel produced a processor whose descendants would dominate computing to this day: the not-so-elegantly-named 8086.

Processors are tightly intertwined with their instruction sets, the commands that tell the processor what to do. The 8086 instruction set burst onto the scene inside the earliest PCs from IBM, which used the 8088, a close variant of the 8086. From that point on there was no looking back. New, more powerful PC processors would emerge, but all were built on top of the 8086 instruction set. New, more complex commands were added as the instruction set matured.

Bright as the future looked for Intel and its followers, competition lurked just over the horizon. In the early 1980s academic research challenged the notion that more was better. Instead of introducing ever more complicated instructions, researchers advocated the opposite. If an instruction set had simpler instructions, but worked very efficiently with a processor, computers would get faster. This approach went by the acronym RISC, for Reduced Instruction Set Computing.

And here's where the story takes a turn. Among the companies experimenting with RISC was Acorn Computers, a small firm based in Cambridge, England. Sophie Wilson, who worked at Acorn, designed a new RISC instruction set. By the mid-1980s the company had a processor to go with it. Acorn was ready to take on the market for PCs.

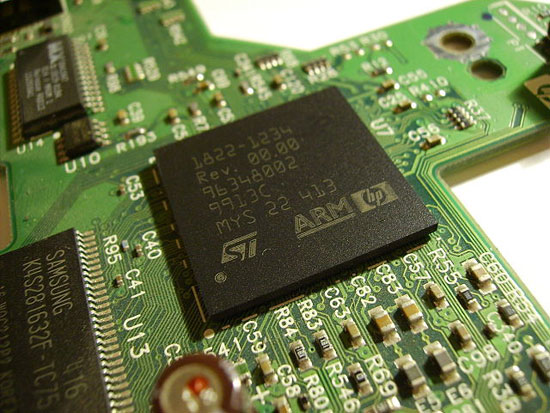

Unfortunately, that's not how things worked out. But fortuitously, Acorn began working with none other than Apple Computer. Apple wanted a processor for its newest gadget, the Newton, a forerunner of the iPad. The Newton flopped, but that's another story. The instruction set didn't, because Acorn's RISC processors used far less power than the alternatives. And that made them ideal for mobile devices: phones, tablets, audio players, you name it.

(Image: ARM processor)

So in 1990 Acorn, Apple, and chip maker VLSI Technology spun off a new company, Advanced RISC Machines, known today simply as ARM. The technology was right, and the world of mobile devices is now dominated by processors using the ARM instruction set.

But PCs, laptops, and most of the non-mobile world are still dominated by extensions of Intel's old instruction set. It's still around because it does a pretty good job, and because the computing ecosystem is hopelessly tangled in its roots. But 8086 descendants tend to draw a lot of power. Perhaps ARM technology is set to dethrone the royal lineage of the 8086. Or perhaps not. Perhaps the two technologies will converge. But with so much money on the line, it's certain to be a clash of powerful interests.

I'm Andy Boyd at the University of Houston, where we're interested in the way inventive minds work.

(Theme music)

Notes and references:

ARM Architecture. From the Wikipedia website: https://en.wikipedia.org/wiki/ARM_architecture. Accessed February 26, 2013.

History of ARM: From Acorn to Apple. From the Telegraph website: http://www.telegraph.co.uk/finance/newsbysector/epic/arm/8243162/History-of-ARM-from-Acorn-to-Apple.html. Accessed February 26, 2013.

Intel 8086. From the Wikipedia website: https://en.wikipedia.org/wiki/Intel_8086. Accessed February 26, 2013.

x86. From the Wikipedia website: https://en.wikipedia.org/wiki/X86. Accessed February 26, 2013.

All pictures — the 8086 microprocessor, ARM processor and the IBM PC — are from Wikimedia Commons.

This episode was first aired on February 28, 2013.