Backpropagation

Today, backpropagation, the trick behind modern AI. The University of Houston presents this series about the machines that make our civilization run, and the people whose ingenuity created them.

______________________

Early pioneers built the first artificial neural networks in the 1950s and ’60s. These systems of simple computing units were wired together in imitation of the brain. They could learn to recognize simple patterns, and researchers suspected they were capable of much more. But there was a catch: nobody knew how to teach them efficiently.

Imagine a network with millions of adjustable knobs. Each knob controls how strongly one artificial neuron influences another. To teach the network to, say, recognize a camel, you need to find the right setting for every single knob. Turning one knob affects everything downstream. How do you figure out which knobs to turn, and by how much?

The answer involves something I teach in calculus – the chain rule. Yes, calculus is useful! The chain rule tells you how turning one knob affects other neurons through a chain of dependencies. But applying the chain rule to neural networks with millions or billions of knobs is like tracking every ripple in an ocean – theoretically possible, but overwhelming.
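To make the chain of dependencies concrete, here is a tiny worked case. The symbols are assumptions chosen for illustration, not anything from the episode: an input $x$ passes through one knob $w_1$ to give $h = w_1 x$, then through a second knob $w_2$ to give the output $y = w_2 h$, and the mistake is measured as $E = \tfrac{1}{2}(y - t)^2$ for a desired output $t$. The chain rule multiplies the links together:

$$
\frac{\partial E}{\partial w_1}
= \frac{\partial E}{\partial y}\cdot\frac{\partial y}{\partial h}\cdot\frac{\partial h}{\partial w_1}
= (y - t)\cdot w_2 \cdot x
$$

With only two knobs this is easy. The difficulty described above comes from repeating it across millions of interlocking chains.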

Many researchers independently discovered the same elegant solution to this problem, a method we now call backpropagation. But it was David Rumelhart, Geoffrey Hinton, and Ronald Williams who demonstrated its power in 1986. The idea is to work backwards: compare the network’s output to the correct answer, then trace the error back through the network, adjusting each knob to reduce the mistake. Repeat this process thousands of times, and the network learns.
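The working-backwards idea can be sketched in a few lines of Python. This is a minimal toy under stated assumptions, not the 1986 formulation: the network has just two knobs (`w1` and `w2`), and the function names, starting values, and learning rate are all hypothetical choices made for the example.

```python
# Hypothetical toy network: input x -> hidden h = w1 * x -> output y = w2 * h.
def forward(w1, w2, x):
    h = w1 * x
    y = w2 * h
    return h, y


def backward(w1, w2, x, target):
    """Return the error and how much each knob contributed to it."""
    h, y = forward(w1, w2, x)
    loss = 0.5 * (y - target) ** 2
    dloss_dy = y - target        # compare the output to the correct answer
    dloss_dw2 = dloss_dy * h     # blame assigned to the last knob
    dloss_dh = dloss_dy * w2     # error traced back one step
    dloss_dw1 = dloss_dh * x     # blame assigned to the first knob
    return loss, dloss_dw1, dloss_dw2


def train(x=1.0, target=2.0, w1=0.5, w2=0.5, learning_rate=0.1, steps=200):
    """Repeatedly nudge both knobs in the direction that reduces the error."""
    losses = []
    for _ in range(steps):
        loss, g1, g2 = backward(w1, w2, x, target)
        losses.append(loss)
        w1 -= learning_rate * g1
        w2 -= learning_rate * g2
    return w1, w2, losses


w1, w2, losses = train()
```

Each pass computes the output, measures the mistake, and hands a share of the blame back to each knob in turn. Real networks do the same bookkeeping layer by layer, reusing the error computed at each layer for the one before it.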

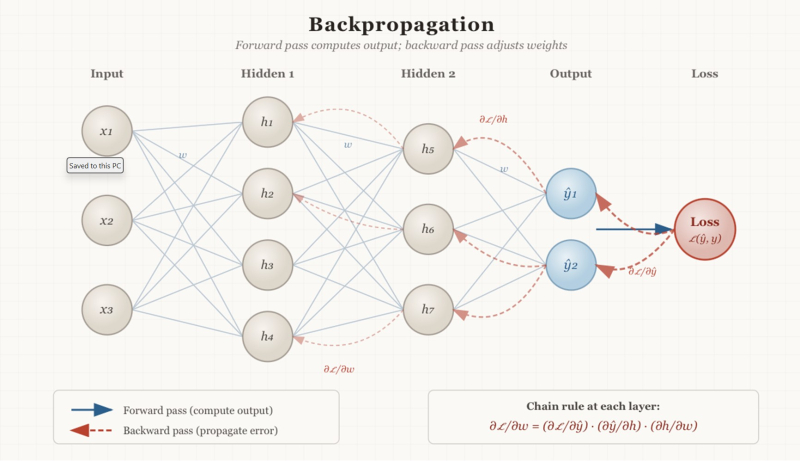

A schematic illustration of the backpropagation algorithm: An input is processed by an artificial neural network consisting of several layers. The output of the network is compared to the desired output. If the two differ, the error is propagated backwards to adjust the weights ("knobs" in the text) to produce a better match. Image generated by Claude.

Despite this breakthrough, neural networks fell out of fashion in the late 1980s. They were hard to train and became overshadowed by expert systems – a rival technology that seemed more promising. Funding dried up, and research entered yet another “AI winter.” But diehards like Hinton refused to give up. His own PhD supervisor had told him to drop neural networks before they ruined his career. Hinton kept going anyway.

The 2000s brought the ingredients neural networks desperately needed: faster processors, graphics cards that could parallelize calculations, and vast amounts of data from the internet. In 2012, AlexNet, a deep neural network developed by Hinton’s group, stunned the field by resoundingly winning an image recognition competition. Computers could finally tell cats from dogs reliably.

This result launched the current AI revolution. Today’s large language models and image generators are all trained using the same backpropagation algorithm Hinton and others perfected decades earlier. In 2024, Hinton shared the Nobel Prize in Physics for his foundational contributions to machine learning.

This is a profoundly human story about brilliant eccentrics and stubborn optimists who kept going until the world finally caught up with their vision. Surprisingly, an application of the chain rule is set to shape our world for decades to come.

This is Krešo Josić at the University of Houston, where we're interested in the way inventive minds work.

(Theme music)

You can find many explanations of the backpropagation algorithm on the web.

Here is a high-level description from IBM.

Here is a more detailed one that should be understandable to anyone who has taken a college course in calculus.

This episode first aired February 25, 2026.