Chatterbots

by Andrew Boyd

Today, we chat. The University of Houston's College of Engineering presents this series about the machines that make our civilization run, and the people whose ingenuity created them.

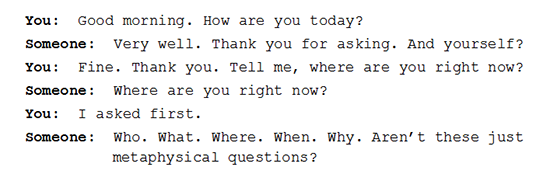

Imagine having a five-minute, typed conversation with someone in another room. Your challenge: determine if the 'someone' is a human or a computer. How would you go about it? What questions would you ask?

While you're thinking about that, let's look at the flip side of the coin. If you were a gifted programmer, could you create a program that responded as if it were human? Could you fool all of the people some of the time? Some of the people all of the time?

The challenge hovers around the question of whether computers can think, but really sidesteps it. Instead, it asks if computers can interact with us in such a way that we believe they can think. The challenge is called the Turing test after computational pioneer Alan Turing, and it continues to befuddle the artificial intelligence community to this day.

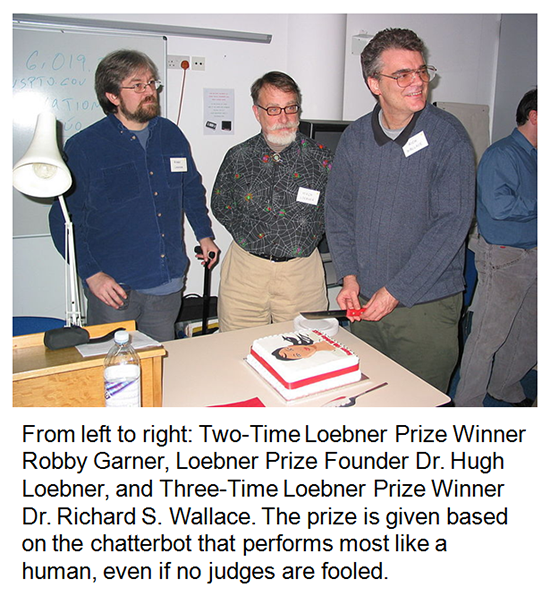

But for creative minds, befuddlement is a source of inspiration. And that's why enthusiasts line up to participate in the annual competition for the Loebner Prize. The Loebner Prize pits humans against specialized computer programs called — get this — chatterbots to see who's the most, well, human. Each judge carries on a typed conversation with two competitors, one human, the other a chatterbot. The judge's task: decide which is which.

Photo by Ulrike Spierling

Computers are pretty good at facts — generally better than we are. But they have a lot of trouble with something we take for granted: the give and take of natural conversation. "What's the family up to?" or "How about those Astros?" Easy questions for us, but they can leave computers scratching their heads — figuratively, of course.

All this adds up to chatterbots that aren't very good at chatting. Judges have been fooled, but only on rare occasions. And even as the chatterbots grow more and more sophisticated, they seem to do worse and worse in competition.

Why? It's not just the chatterbots that've gotten better. The humans have, too. Judges have learned what computers have trouble with and ask better questions. And the humans who pair off with their chatterbot adversaries, motivated by the chance to win the 'most human human' prize, have learned how to better communicate their humanness: joking with the judges, discovering shared likes and dislikes. We humans didn't just start with the advantage of being human. When it comes to playing the game, we're improving faster than our mechanical challengers.

The game-like nature of the Loebner competition doesn't sit well with many researchers, who view the event as more spectacle than science. Chatterbots are only a tiny part of artificial intelligence, and their poor performance doesn't reflect well on the field as a whole. Still, it's nice to know that, for the time being, we remain victorious when confronted by our computer overlords, at least when it comes to a real and uniquely human stronghold: the ability to chat.

I'm Andy Boyd at the University of Houston, where we're interested in the way inventive minds work.

(Theme music)

For related episodes, see ELIZA and ALAN TURING.

Many different chatterbots are available online, including A.L.I.C.E. and Jabberwacky, which can be found at http://alice.pandorabots.com/ and http://www.jabberwacky.com/, respectively. All sites accessed September 10, 2013.

B. Christian. "Mind vs. Machine." The Atlantic, March 2011. See also: http://www.theatlantic.com/magazine/archive/2011/03/mind-vs-machine/308386/. Accessed September 10, 2013.

The picture of the three men is from Wikimedia Commons. The remaining picture is by E. A. Boyd.

This episode first aired on September 12, 2013.