Bits, Nibbles, and Bytes

by Andrew Boyd

Today, bits, nibbles, and bytes. The University of Houston's College of Engineering presents this series about the machines that make our civilization run, and the people whose ingenuity created them.

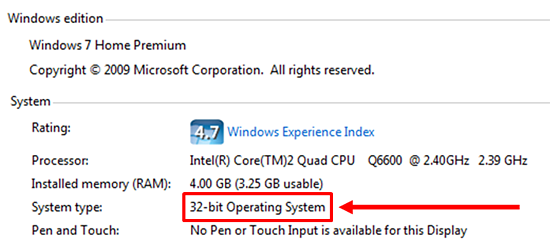

If you've purchased a laptop lately, you've likely been deluged with an array of technical details, like "number of bits." Thirty-two is passé. Sixty-four is all the rage. Why should we care?

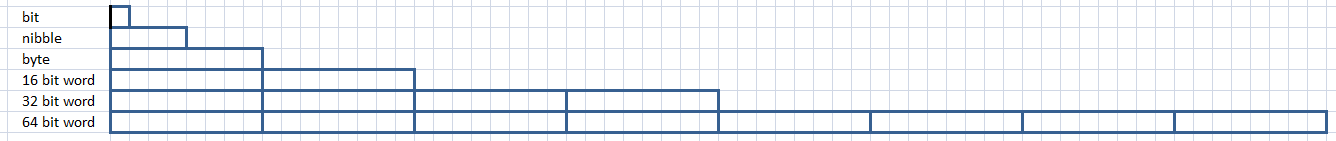

For one thing, the story provides an entree into some of the world's best computer jargon. A bit, or binary digit, is the electronic equivalent of a 0 or 1. But a computer working with individual bits would be like you and me carrying jars of pennies around. Bits are so small they're inconvenient to work with.

So computers work with packages of bits. Computer scientists call these packages words. The best-known package size is a byte, which is a package of 8 bits. When the first commercial processors came on the market a byte seemed like a pretty good size, in part because it was perfect for packing keyboard characters: the letter k or the semicolon. Bigger things had to be placed in more than one package, things like counting numbers over 255. Packing into more than one package slowed things down, but if packages were too big they'd often carry costly wasted space.
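To make the packing idea concrete, here is a minimal Python sketch. The helper name `bytes_needed` is made up for illustration; it simply counts how many 8-bit packages a whole number would occupy, assuming the number isn't negative.

```python
def bytes_needed(value: int) -> int:
    """Smallest number of 8-bit bytes that can hold a non-negative integer."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative integers")
    # bit_length() is the number of binary digits; round up to whole bytes.
    return max(1, (value.bit_length() + 7) // 8)


# Keyboard characters map to small numbers that fit in one byte.
letter_k = ord("k")
semicolon = ord(";")

for value in (letter_k, semicolon, 255, 256):
    print(value, "needs", bytes_needed(value), "byte(s)")
```

The number 255 is the largest that fits in a single byte, since eight binary digits all set to 1 add up to 255. One more, and a second package is required.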

Some early computers worked with packages as small as 4 bits — half a byte. In computer-speak, that's a nibble. Bytes or nibbles, they were shuttled around inside a computer on a bus.
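As a rough illustration of how a byte breaks into two nibbles, the Python sketch below uses two hypothetical helpers, `split_into_nibbles` and `join_nibbles`. They aren't taken from any particular machine; they only show the arithmetic.

```python
def split_into_nibbles(byte: int) -> tuple:
    """Split one 8-bit value into its high and low 4-bit halves."""
    if not 0 <= byte <= 0xFF:
        raise ValueError("a byte holds values from 0 to 255")
    high = byte >> 4      # the top four bits
    low = byte & 0x0F     # the bottom four bits
    return high, low


def join_nibbles(high: int, low: int) -> int:
    """Put two 4-bit halves back together into one byte."""
    return (high << 4) | low


high, low = split_into_nibbles(ord("k"))
print(high, low)
print(chr(join_nibbles(high, low)))
```

Each nibble holds a value from 0 to 15, which is why a single hexadecimal digit is often used to write one down.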

Thanks to rapid advancements in technology, the 1970s and 1980s saw a relatively quick succession from 8-bit to 16-bit to 32-bit packages. Then things slowed just a bit. But in the early twenty-first century there was again a push for bigger packages, this time 64 bits. Why the change?

Among the important items carried in computer packages are addresses. Computer addresses are like our home addresses. They contain information about where other packages are supposed to go. For a long time, 32-bit packages held all the addresses we needed. But as our computers got bigger, we were running out. Thirty-two bits translates to about four billion addresses. Sixty-four gives us well over a billion times a billion.
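The address counts come from simple arithmetic: each added bit doubles the number of distinct values a package can hold, so a package of n bits can name 2 to the power n addresses. A short Python sketch, with `address_count` as an illustrative name only:

```python
def address_count(bits: int) -> int:
    """Number of distinct addresses a package of the given size can name."""
    return 2 ** bits


addresses_32 = address_count(32)
addresses_64 = address_count(64)

print(f"{addresses_32:,}")   # 4,294,967,296
print(f"{addresses_64:,}")   # 18,446,744,073,709,551,616

# Doubling the package size squares the number of addresses.
print(addresses_64 == addresses_32 * addresses_32)   # True
```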

Will we ever need that many? And why jump from 32 to 64 bits? Interestingly, there's not an especially good answer. In fact, computers have been designed using packages with all manner of bit counts over the years. But 4, 8, 16, 32, and 64 predominate because they're special. They're not just double the preceding number; they're all powers of 2: numbers that breathe digital life into a computer. A jump from 32 to anything less than 64 would fail for aesthetic reasons.

And many people believe that within a few decades a billion times a billion won't be big enough. Now that's something to noodle on.

I'm Andy Boyd at the University of Houston, where we're interested in the way inventive minds work.

(Theme music)

Notes and references:

Computers have been designed around different word lengths ("package sizes") since the advent of computing, and many different lengths are in use today depending on the application (at the moment, 32-bit architectures remain common in mobile devices). The progression described here loosely correlates with the x86 instruction set architecture which, among other things, dominates the PC market.

Many factors other than aesthetics have contributed to the choice of 64 bits. Among those factors are Moore's observation about the growth of memory, and a variety of practical simplifications related to the transition from 32 bits. However, as stated in the essay, 64 isn't a necessary choice for any scientific reason.

Most of this essay was based on the author's personal knowledge of computers. The following links are useful for individuals wanting to learn more.

64 Bit Computing. From the Wikipedia website: https://en.wikipedia.org/wiki/64-bit_computing. Accessed February 19, 2013.

Word (Computer Architecture). From the Wikipedia website: https://en.wikipedia.org/wiki/Word_%28computer_architecture%29. Accessed February 19, 2013.

All pictures by E. A. Boyd.

This episode was first aired on February 20, 2013.